# When filebeat runs as non-root user, this directory needs to be writable by group (g+w). # data folder stores a registry of read status for all files, so we don't send everything again on a Filebeat pod restart

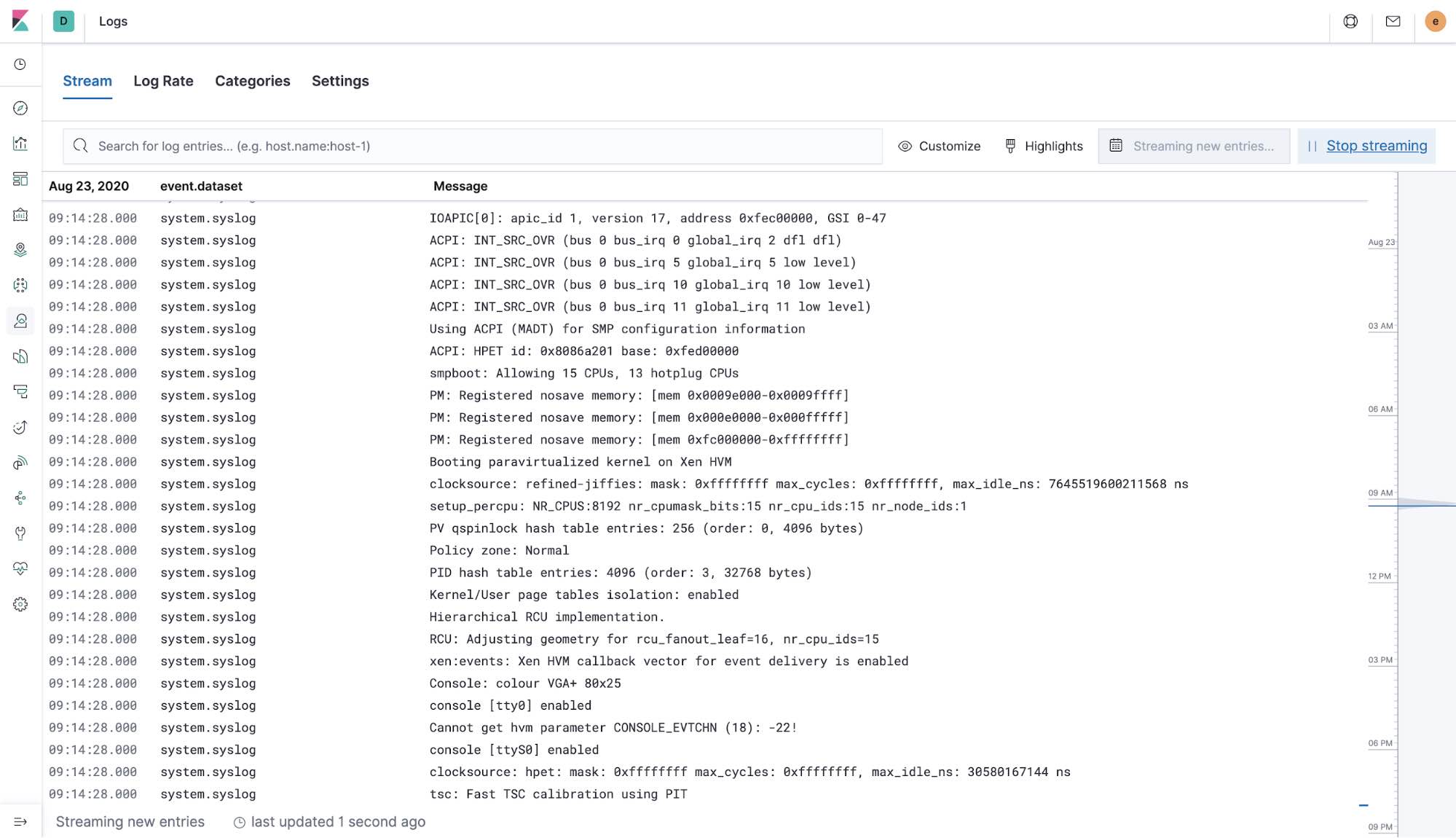

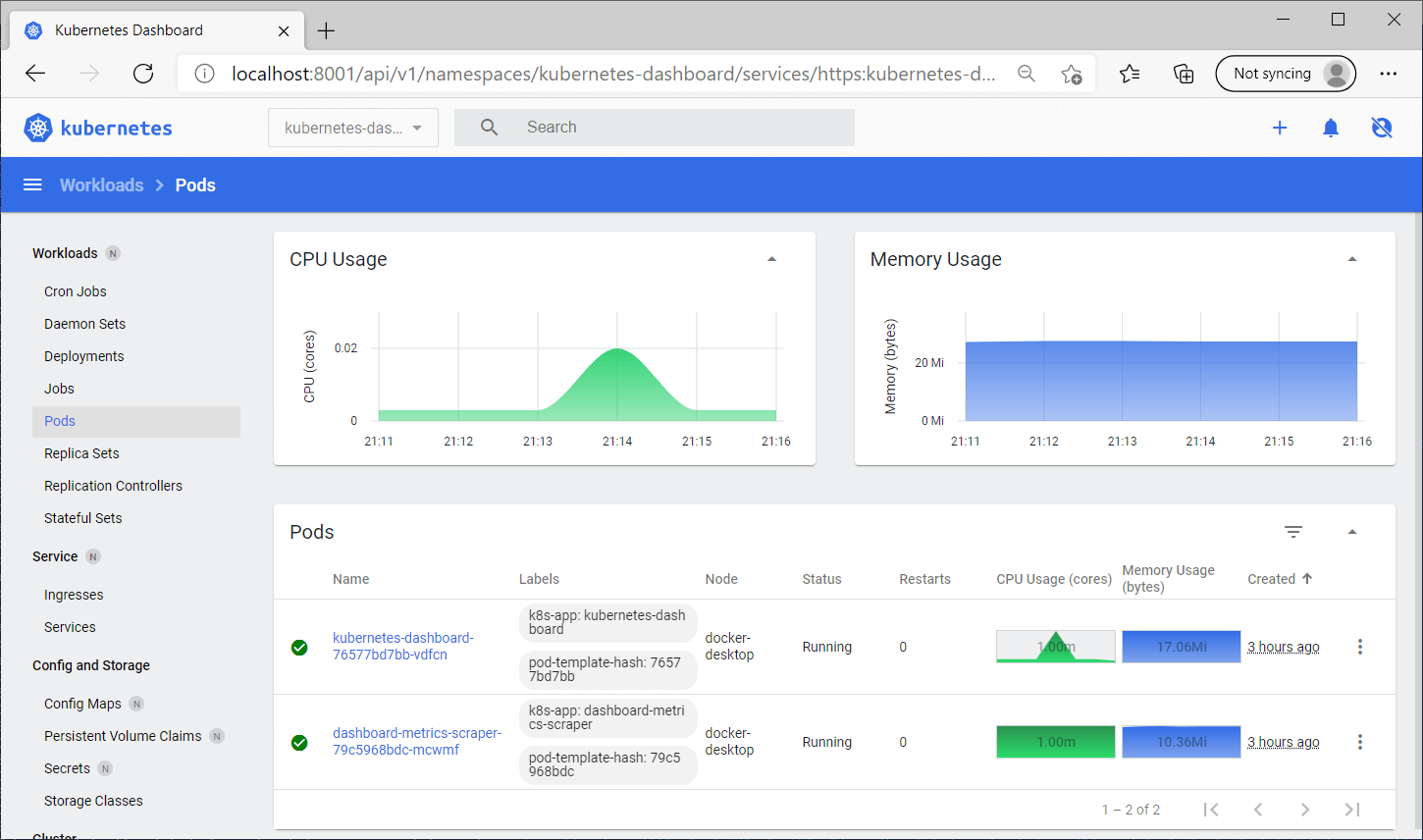

MountPath: /etc/filebeat-application2.yml MountPath: /etc/filebeat-application1.yml # If using Red Hat OpenShift uncomment this: # To enable hints based autodiscover, remove `filebeat.inputs` configuration and uncomment this: Therefore, I get log only from application2.Ĭan you please help me about how to feed two filebeat configuration files into /beats/filebeat DaemonSet? or how to config two "todiscovery:" rules with 2 separate "output.logstash:"?īelow is my complete filebeat-kubernetes-whatsapp.yaml. Image: /beats/filebeat:7.10.1īut only /etc/filebeat-application2.yml is affected. I create 2 filebeat.yml(filebeat-application1.yml and filebeat-application2.yml) files in configMap and then feed both files as args in DaemonSet(/beats/filebeat:7.10.1) as below. Therefore I deploy a filebeat DaemonSet on the Kubernetes cluster to fetch the logs from my applications(Application1, Application2) and run logstash service on the instance where I want to save the log files(outside of the Kubernetes cluster). I would like to collect the logs from my applications from outside of the Kubernetes cluster and save them in different directories(for eg: /var/log/application1/application1-YYYYMMDD.log and /var/log/application2/application2-YYYYMMDD.log). We can see logs of container logged in the Elastic search.I have 2 applications (Application1, Application2) running on the Kubernetes cluster. If we go to discover tab in Kibana we will find the following output: Now, to deploy the logstash use the following command: helm install elk-filebeat elastic/filebeat -f values-2.yaml Verify ELK installation Create a file values-2.yaml with the following content: daemonset: Now, we will create a custom values file for Logstash helm chart. 
Now to deploy the logstash, execute the following command: helm install elk-logstash elastic/logstash -f values-2.yaml Deploy the filebeat Create a file values-2.yaml with the following content: persistence: To verify the kibana is working fine, use the ingress host on browser. Now, to deploy the helm chart use the command: helm install elk-kibana elastic/kibana -f values-2.yamls Create a file values-2.yaml with the following content: elasticsearchHosts: " ingress: Now, we will create a custom values file for Kibana helm chart. To verify the elastic search is working fine, use the ingress host on browser. Now to deploy the elastic search, execute the command: helm install elk-elasticsearch elastic/elasticsearch -f values-2.yaml -namespace logging -create-namespace Now execute the following commands to add the Elastic Search helm repo: helm repo add elastic host: es-elk.s9.devopscloud.link #Change the hostname to the one you need Create a file, values-2.yaml with the following content: replicas: 1 Be sure to deploy the ingress controller beforehand. Deploy Elastic Searchįirst we will create a values file which will expose the elastic search using ingress. Now let us deploy each and every component one by one. It exports and forwards the log to Logstash. Filebeat: Filebeat is very important component and works as the log exporter.It ingests data(logs) from various sources and processes them before sending to Elastic Search Logstash: Logstash is data ingestion tool.

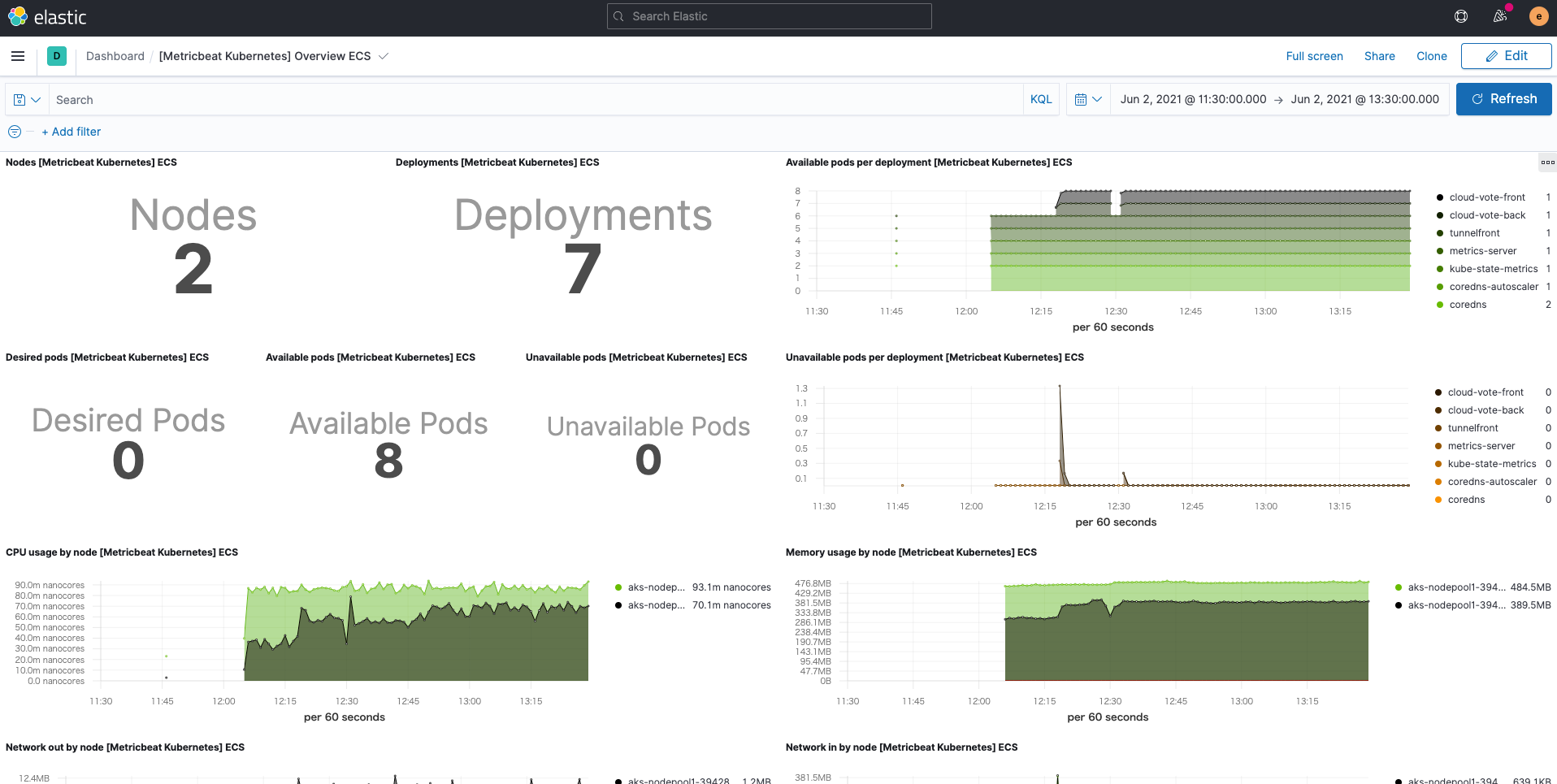

You can use the Cosign application to verify the Filebeat Docker image signature. Obtaining Filebeat for Docker is as simple as issuing a docker pull command against the Elastic. Kibana: Kibana is the visualization platform and we can use Kibana to query Elastic Search Run Filebeat on Docker edit Pull the image edit.Elastic Search: This is the database which stores all the logs.ELK stack helps to aggregate these logs and explore through those logs. Rise of micro-service architecture demands better way of aggregating and searching through logs for debugging purpose. It uses limited resources, which is important because the Filebeat agent must run on every server where you want to capture data. The main purpose of this is to aggregate logs. ELK stack consists of Elastic Search, Kibana, Logstash.
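On the question of feeding two Filebeat configuration files into one DaemonSet: a Filebeat instance accepts only a single output, and when several files are passed with `-c` the settings are most likely merged so that the later file overrides the earlier one, which would match the behaviour described. One possible way around this, sketched here under assumptions, is a single `filebeat.yml` with two autodiscover templates that tag each event, leaving the split into directories to Logstash. The pod labels `app=application1` / `app=application2`, the field name `app_id`, the `NODE_NAME` environment variable, and the Logstash address are all hypothetical and not taken from the original manifest.

```yaml
# filebeat.yml: one config for both applications (a sketch, not the asker's manifest).
# Assumption: the pods carry the labels app=application1 and app=application2.
filebeat.autodiscover:
  providers:
    - type: kubernetes
      # Assumption: NODE_NAME is injected into the DaemonSet from spec.nodeName.
      node: ${NODE_NAME}
      templates:
        # Template for containers of Application1.
        - condition:
            equals:
              kubernetes.labels.app: "application1"
          config:
            - type: container
              paths:
                - /var/log/containers/*${data.kubernetes.container.id}.log
              # Tag each event so Logstash can tell the applications apart.
              fields:
                app_id: application1
              fields_under_root: true
        # Template for containers of Application2.
        - condition:
            equals:
              kubernetes.labels.app: "application2"
          config:
            - type: container
              paths:
                - /var/log/containers/*${data.kubernetes.container.id}.log
              fields:
                app_id: application2
              fields_under_root: true

# Filebeat allows a single output, so both applications share it.
output.logstash:
  # Hypothetical address of the Logstash instance outside the cluster.
  hosts: ["logstash.example.internal:5044"]
```

With events tagged this way, the Logstash side could write to per-application files by interpolating the field in a file output path, for example `path => "/var/log/%{app_id}/%{app_id}-%{+YYYYMMdd}.log"`. The other route, if two genuinely separate outputs are required, is to run two Filebeat DaemonSets, each with its own configuration file.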
